Vega 20 managed 73MHz (before it was further reduced) and twice the HBM2 stacks from going from 14nm to 7nm, while still being a 300W toaster. Plus its cost went up significantly, so even at $700 it was sold at a $100-200 loss. The biggest problem with smaller nodes is that after 14nm they cost more, transistor for transistor. So even plain shrinks increase cost.

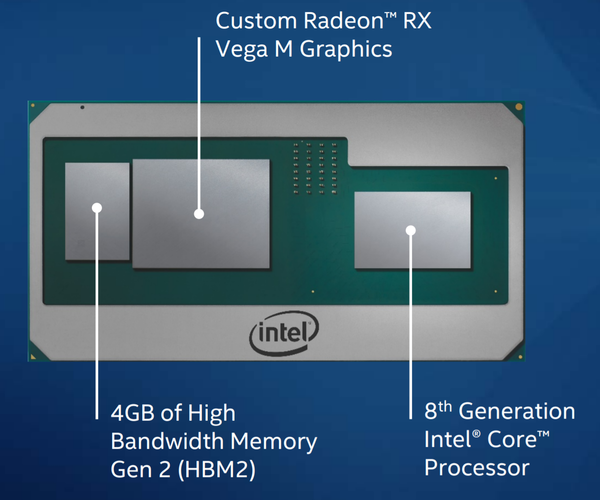

Going OT, AMD wants a slice of Intel's iGPU market with the Ryzen 2xxxG line. Smaller sizes mean they can pack in a low-power GPU along with more CPU cores. That's why AMD wants to go smaller.

The 1660 didn't have anything to do with AMD; it was there to make Nvidia users upgrade. It's not a static market, you know.

The 1660 is the sweet spot where GPU vendors make money, hitting AMD where they have the value cards. They see AMD starting to gain traction and they strip away the dubious value bits.

Not that node names have any relevance anymore; it's pure PR, renamed whenever needed. TSMC's 20nm node, for example, managed to become a 12nm node. The last time node names had any real meaning was 20 years ago. Today you have to look at the actual density and electrical properties. Else it's just Samsung bananas vs TSMC mangos vs Intel pineapples. TSMC also just this week renamed a 7nm node to 6nm because... Samsung had slid in a 5nm node for PR and TSMC had already used that number.

Like I said, the trend is for AMD to cram more into a CPU / APU and compete with Intel on volumes. The future for them is custom integrated.

Sony is also slowly preparing people for the PS5 costing quite a bit more than the PS4.

Every recent console launch is the same, and then they'll pare back the next model trying to trim the excesses of the first model.